In today's data-rich environment, organizations need to make sense of large volumes of information held in complex data repositories to gain a competitive advantage. Traditional ways of querying a data catalog require technical skills and query languages that are not accessible to everyone. This is where an AI agent that navigates your data catalog using natural language processing (NLP) can make all the difference.

A chatbot with human-like language comprehension lets users access information naturally: they ask questions and get answers without any specialized knowledge. This article covers the advantages of such an AI agent, its essential features, and how to build one effectively, including how keyword research keeps it convenient and efficient to use.

What Is an AI Agent for Data Catalog Exploration?

Understanding the Role of an AI Agent

An AI agent is a computer program that can perform tasks and communicate with people in a human-like way without being directly controlled by them. Applied to data catalog exploration, the agent acts as an interface: it interprets users' natural-language queries and then parses, processes, and retrieves relevant information across large, heterogeneous repositories.

Benefits of an AI Agent in Data Management

- User-Friendliness: Lets users analyze information without complicated query languages.

- Efficiency: Speeds up data search by answering queries in real time.

- Contextual Insight: Uses natural language processing to understand the intent behind a user's question.

- Increased Collaboration: Teams can draw insights on their own without the constant involvement of a data specialist.

Technologies Used to Build the AI Agent

Natural Language Processing (NLP)

Natural language processing forms the core of the AI agent. NLP allows the agent to understand and interpret human language, whether written or spoken, by analyzing the context and structure of input queries. The technology enables the system to:

- Learn the nuances of phrasing, variations, and synonyms.

- Extract the intent and entities from questions.

- Translate natural language into structured requests that retrieve data from the catalog.
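
As a rough illustration of intent and entity extraction, here is a minimal rule-based sketch. It is not how a production agent would work (that would typically rely on a trained language model); the intent names, regular expressions, and entity vocabulary are all hypothetical and exist only to show the shape of the output.

```python
import re
from dataclasses import dataclass, field

# Hypothetical intent patterns; a real agent would likely use a trained model.
INTENT_PATTERNS = {
    "find_dataset": re.compile(r"\b(find|show|list|search)\b", re.IGNORECASE),
    "describe_dataset": re.compile(r"\b(describe|explain|what is)\b", re.IGNORECASE),
}

# Hypothetical vocabulary of entities that the catalog knows about.
KNOWN_ENTITIES = {"sales", "customers", "orders", "finance"}


@dataclass
class ParsedQuestion:
    intent: str
    entities: list[str] = field(default_factory=list)


def parse_question(question: str) -> ParsedQuestion:
    """Guess the intent and pull known entity words out of a question."""
    intent = "unknown"
    for name, pattern in INTENT_PATTERNS.items():
        if pattern.search(question):
            intent = name
            break
    # Keep only the words that appear in the known-entity vocabulary.
    words = re.findall(r"[a-z]+", question.lower())
    entities = [w for w in words if w in KNOWN_ENTITIES]
    return ParsedQuestion(intent=intent, entities=entities)


parsed = parse_question("Show me sales datasets for customers")
print(parsed.intent, parsed.entities)
```

The structured `ParsedQuestion` is what later stages would turn into an actual catalog request.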

Data Catalog Integration

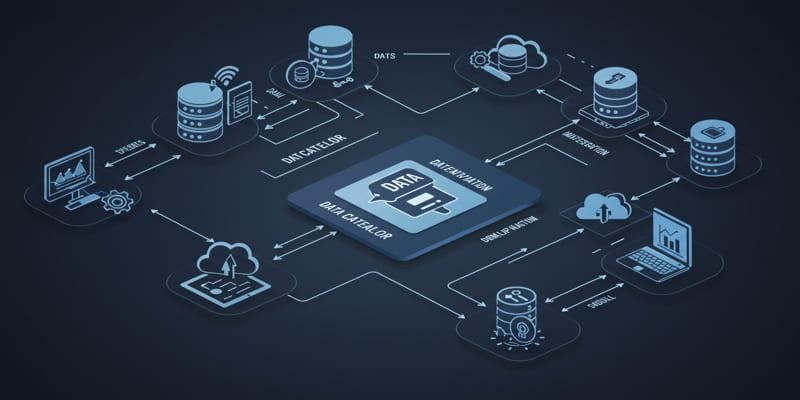

A data catalog is a collection of the metadata, schemas, and business context for all datasets an organization offers. Tight integration between the AI agent and the data catalog ensures:

- Uninterrupted access to a variety of data.

- Contextually relevant results.

- Consistent information management throughout exploration.
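
To make the idea of a catalog concrete, the sketch below models an entry with the three ingredients named above (metadata, schema, business context) and a simple lookup the agent could call. The class names, fields, and sample dataset are assumptions for illustration, not the API of any particular catalog product.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    name: str
    description: str                  # metadata
    schema: dict[str, str]            # column name -> column type
    tags: set[str] = field(default_factory=set)
    owner: str = "unknown"            # business context (hypothetical field)


class DataCatalog:
    """A tiny in-memory stand-in for a real data catalog."""

    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        self._entries[entry.name] = entry

    def search(self, term: str) -> list[CatalogEntry]:
        """Return entries whose name, description, or tags mention the term."""
        term = term.lower()
        return [
            e for e in self._entries.values()
            if term in e.name.lower()
            or term in e.description.lower()
            or term in {t.lower() for t in e.tags}
        ]


catalog = DataCatalog()
catalog.register(CatalogEntry(
    name="sales_2024",
    description="Monthly sales totals",
    schema={"region": "string", "total": "decimal"},
    tags={"Revenue"},
    owner="finance team",
))
print([e.name for e in catalog.search("revenue")])
```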

Continuous Keyword Research and Optimization

Incorporating continuous keyword research into the development of the AI agent helps identify the terms and phrases users most often use when searching for data insights. This keyword knowledge improves the agent's understanding and responsiveness by:

- Regularly refreshing the agent's vocabulary and search intents.

- Improving how accurately user queries match data assets.

- Adapting to evolving user behavior and new topics in real time.
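
One simple way to approximate keyword research is to count the terms that show up in logged user queries. The sketch below assumes you have such a log as a list of strings; the stopword list and sample queries are made up for the example.

```python
import re
from collections import Counter

# Hypothetical stopword list; tune it to your own query logs.
STOPWORDS = {"the", "a", "an", "of", "for", "me", "show", "find",
             "in", "by", "to", "is", "what"}


def top_keywords(queries: list[str], n: int = 3) -> list[tuple[str, int]]:
    """Count non-stopword terms across queries and return the n most common."""
    counts: Counter[str] = Counter()
    for query in queries:
        for word in re.findall(r"[a-z]+", query.lower()):
            if word not in STOPWORDS:
                counts[word] += 1
    return counts.most_common(n)


logged_queries = [
    "show me sales by region",
    "find sales for customers",
    "sales in region north",
]
print(top_keywords(logged_queries, n=2))
```

Rerunning a count like this on a schedule is one way to keep the agent's vocabulary aligned with what users actually type.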

How to Build Your AI Agent for Data Catalog Exploration

Step 1: Clarify Your Use Cases and User Personas

Identify your major use cases before building your AI agent, such as business analysts who need fast insights or data scientists who need to probe data in depth. Knowing your user personas helps you tailor the AI's language models and responses.

Step 2: Catalog, Store, and Index Your Data

Make sure your data catalog is detailed, well structured, and rich in metadata. This preparation involves:

- Listing all datasets with accurate descriptions.

- Labeling data with related keywords and topics identified through keyword research.

- Setting up access rules and security.
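
The three preparation tasks above can be sketched together as a small inverted index: each dataset has a description and keyword labels, and lookups are filtered by role. The record layout, role names, and datasets below are hypothetical, and a real deployment would delegate access control to the catalog's own security model.

```python
from collections import defaultdict

# Hypothetical dataset records: description, keyword labels, and access rules.
DATASETS = [
    {
        "name": "sales_2024",
        "description": "Monthly sales totals by region",
        "keywords": ["revenue", "sales"],
        "allowed_roles": {"analyst", "admin"},
    },
    {
        "name": "payroll",
        "description": "Employee salary records",
        "keywords": ["salary", "hr"],
        "allowed_roles": {"admin"},
    },
]


def build_index(datasets: list[dict]) -> dict[str, set[str]]:
    """Map each description word and keyword label to the dataset names using it."""
    index: dict[str, set[str]] = defaultdict(set)
    for ds in datasets:
        tokens = ds["description"].lower().split()
        tokens += [k.lower() for k in ds["keywords"]]
        for token in tokens:
            index[token].add(ds["name"])
    return index


def lookup(index: dict[str, set[str]], datasets: list[dict],
           term: str, role: str) -> list[str]:
    """Return matching dataset names that the given role is allowed to see."""
    by_name = {ds["name"]: ds for ds in datasets}
    hits = index.get(term.lower(), set())
    return sorted(n for n in hits if role in by_name[n]["allowed_roles"])


index = build_index(DATASETS)
print(lookup(index, DATASETS, "salary", "analyst"))
print(lookup(index, DATASETS, "salary", "admin"))
```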

Step 3: Select an NLP Framework and AI Models

Choose mature NLP frameworks that can understand complex natural-language queries. Well-known options include:

- Language understanding with OpenAI's GPT models or comparable alternatives.

- Models trained and customized on industry-specific jargon and dataset descriptors.

Step 4: Build the Conversational Interface

Create an interface (a chatbot or voice assistant) where people can ask questions in their own words. The interface should be intuitive and should:

- Sustain the dialog, so users can ask follow-up questions and get recaps.

- Suggest completions for incomplete searches, based on keyword trends.

- Present data insights graphically when showing results.
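
Suggestions for incomplete searches can be as simple as prefix matching against popular past searches. The following sketch assumes you already have search phrases with usage counts (the numbers here are invented) and ranks matches by popularity.

```python
def suggest(partial: str, keyword_counts: dict[str, int], limit: int = 3) -> list[str]:
    """Return the most popular known searches that start with the partial input."""
    prefix = partial.lower().strip()
    matches = [(kw, count) for kw, count in keyword_counts.items()
               if kw.startswith(prefix)]
    # Most frequent first; ties are broken alphabetically for stable output.
    matches.sort(key=lambda pair: (-pair[1], pair[0]))
    return [kw for kw, _ in matches[:limit]]


# Hypothetical keyword trend data: search phrase -> how often it was used.
trends = {
    "sales by region": 40,
    "sales forecast": 25,
    "salary bands": 10,
    "customer churn": 30,
}
print(suggest("sal", trends, limit=2))
```

Prefix matching is a deliberate simplification; fuzzy or semantic matching would cope better with typos and rephrasing.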

Step 5: Implement Query Processing and Response Mapping

Automatically translate natural language into structured queries that can run against your data catalog back end. The NLP algorithms analyze the intent and extract the required parameters, and then:

- Run precise data queries.

- Display results clearly, with any explanations or visualizations that are needed.
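
The mapping from an extracted intent to a structured query, and from there to a response, might look like the sketch below. It assumes the intent and parameters have already been extracted upstream; the intent names, the `StructuredQuery` shape, and the in-memory catalog are illustrative assumptions rather than a real back-end API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StructuredQuery:
    action: str                  # "search" or "describe"
    keywords: tuple[str, ...]    # parameters extracted from the question


# Hypothetical mapping from detected intent to a catalog action.
INTENT_TO_ACTION = {"find_dataset": "search", "describe_dataset": "describe"}

# Hypothetical in-memory catalog: dataset name -> description.
CATALOG = {
    "sales_2024": "Monthly sales totals by region",
    "customer_master": "One row per customer with contact details",
}


def to_structured_query(intent: str, entities: list[str]) -> StructuredQuery:
    """Turn an extracted intent and its entities into a structured query."""
    action = INTENT_TO_ACTION.get(intent, "search")
    return StructuredQuery(action=action, keywords=tuple(e.lower() for e in entities))


def run_query(query: StructuredQuery, catalog: dict[str, str]) -> list[str]:
    """Run the query and shape the response according to the action."""
    if not query.keywords:
        return []  # nothing to match on
    matches = [
        name for name, description in catalog.items()
        if all(k in name.lower() or k in description.lower() for k in query.keywords)
    ]
    if query.action == "describe":
        return [f"{name}: {catalog[name]}" for name in matches]
    return matches


query = to_structured_query("describe_dataset", ["customer"])
print(run_query(query, CATALOG))
```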

Step 6: Test, Train, and Refine with Real User Data

Use real interactions with users to help the AI agent keep learning. Track performance metrics such as accuracy, response time, and end-user satisfaction. Include regular keyword research so the AI stays aware of new terms and search patterns.
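
The three metrics mentioned above can be computed from a simple interaction log. In this sketch the `Interaction` record, the 1-to-5 satisfaction scale, and the sample values are assumptions; how you judge whether an answer was "correct" is up to your own review process.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Interaction:
    correct: bool            # did the agent return the right data asset?
    response_seconds: float  # time taken to answer
    satisfaction: int        # user rating on a hypothetical 1-5 scale


def summarize(interactions: list[Interaction]) -> dict[str, float]:
    """Compute accuracy, average response time, and average satisfaction."""
    if not interactions:
        raise ValueError("need at least one interaction to summarize")
    return {
        "accuracy": sum(i.correct for i in interactions) / len(interactions),
        "avg_response_seconds": mean(i.response_seconds for i in interactions),
        "avg_satisfaction": mean(i.satisfaction for i in interactions),
    }


# Made-up sample log for illustration.
log = [
    Interaction(correct=True, response_seconds=1.0, satisfaction=5),
    Interaction(correct=False, response_seconds=3.0, satisfaction=3),
    Interaction(correct=True, response_seconds=2.0, satisfaction=4),
    Interaction(correct=True, response_seconds=2.0, satisfaction=4),
]
print(summarize(log))
```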

Best Practices for Improving the AI Agent with Keyword Research

Reduce Language Ambiguity and Clarify Intent

By studying the words and phrases users apply most often, the AI agent can be trained to recognize different phrasings of the same question. This reduces miscommunication and improves accuracy when retrieving relevant data from the catalog.

Semantic Keyword Grouping

Group related keywords by meaning rather than treating each keyword in isolation. For example, the AI agent must learn that "data inventory" and "data catalog" are similar concepts. This semantic understanding is essential to building an AI agent that actually speaks the users' language.
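
A hand-maintained synonym table is one minimal way to sketch semantic grouping: every variant in a group is rewritten to a single canonical term before the query is processed. Only the "data inventory" and "data catalog" pairing comes from the example above; the other variants are hypothetical, and a production agent would more likely rely on embeddings or a learned model.

```python
import re

# Canonical term -> variants that should be treated as the same concept.
# Everything except "data inventory" / "data catalog" is a made-up example.
SYNONYM_GROUPS = {
    "data catalog": {"data catalog", "data inventory", "metadata catalog"},
    "customer": {"customer", "client", "account holder"},
}

# Reverse lookup: variant -> canonical term.
CANONICAL = {
    variant: canonical
    for canonical, variants in SYNONYM_GROUPS.items()
    for variant in variants
}


def canonicalize(text: str) -> str:
    """Rewrite every known variant in the text to its canonical term."""
    result = text.lower()
    # Replace longer variants first so multi-word phrases are matched whole.
    for variant in sorted(CANONICAL, key=len, reverse=True):
        pattern = rf"\b{re.escape(variant)}\b"
        result = re.sub(pattern, CANONICAL[variant], result)
    return result


print(canonicalize("Where is the Data Inventory for each client?"))
```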

Avoid Keyword Stuffing to Keep Responses Natural

Strike a balance between using SEO keywords and preserving readability and the conversational, casual tone users expect from an AI assistant. Keywords should be integrated smoothly so that the AI agent does not come across as mechanical or repetitive.

Conclusion

Building an AI agent that uses natural language processing to explore your data catalog is a transformative step toward democratizing data access in your organization. It removes the barriers between complex data repositories and users by letting people interact with data in plain, everyday language.

This not only streamlines the workflows of analysts and business users but also ensures that decisions rest on timely, data-driven insights. Ongoing keyword research adds a further level of responsiveness, making the AI a truly intelligent assistant as it learns your data ecosystem.