AI development doesn't end at deployment. Releasing a model into production is a transition, not a conclusion. The behavior of a model in the real world often reveals patterns, edge cases, and failures that controlled environments can't anticipate. Initial benchmarks may indicate strong performance, but these numbers rarely hold steady once the model encounters fresh data streams, changing conditions, and evolving user behavior. Without continuous evaluation, system reliability erodes over time. Long-term performance depends on detecting when the model starts drifting from its intended output or when external dynamics render its training data less representative. Active monitoring makes this possible.

Real-World Data Always Shifts

Real-world data doesn’t sit still. Models trained on historical data are quickly tested when exposed to live environments. A language model that once responded well to tech support queries might start to falter as software updates introduce new terminology. In healthcare, a model trained on pre-pandemic symptoms might misinterpret patterns from a new virus. Financial models built around old economic conditions can become blind to sudden market shifts.

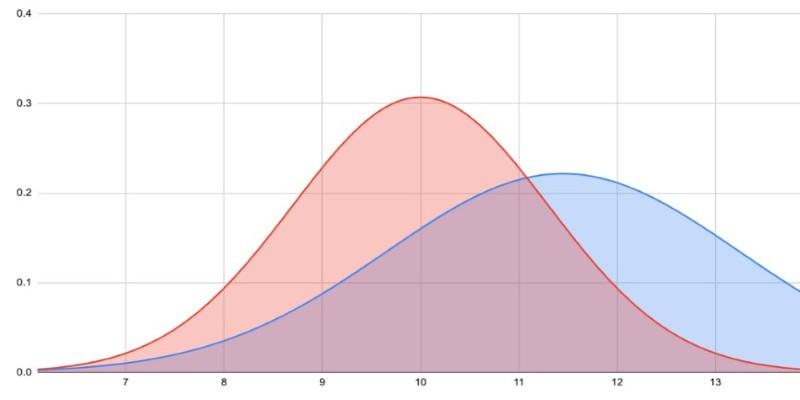

This isn’t a failure of design. It’s a natural result of change. As data landscapes evolve, so do the demands placed on deployed systems. But unless teams track how incoming data is shifting, models start to fall behind. This is data drift—when a model's assumptions no longer fit the world it’s working in.

One-off evaluations miss this. Without real-time comparisons against updated ground truth, there's no early warning. The system appears fine—until performance drops hard. Teams need ways to log predictions, capture user signals, and regularly align performance metrics with the current environment. Otherwise, the model doesn’t just degrade—it does so silently, and by the time issues surface, recovery is more expensive than prevention. Continuous evaluation is the only way to stay ahead of that curve.

Evaluation Metrics Don’t Always Reflect Reality

Evaluation during development tends to be neat and structured. You’ve got benchmark datasets, cross-validation, and a sense of control over inputs. But once a model is out in the real world, that structure falls apart. People don’t use products in predictable ways. In a recommender system, just putting the model in front of users can start changing their behavior. They begin responding to suggestions, which in turn shifts the data being collected. That feedback loop never shows up in pre-launch metrics.

There’s also the issue of oversimplified indicators. Accuracy might look solid in testing, but it tells you nothing about fairness or how the model performs across less-represented groups. A model could deliver high precision overall while quietly underperforming for certain segments. That becomes hard to detect unless you’re tracking how performance breaks down across different user populations.

To really understand what a model is doing, you need more than aggregate metrics. You need ongoing audits, adversarial tests, and targeted reviews of edge cases. Segment-level tracking should be built into the monitoring stack. Without it, you’re flying blind. And once a problem does show up, it’s usually already affecting users. Quiet failures don’t stay quiet for long.

Model Drift and Degraded Reasoning Over Time

Model drift isn’t limited to data input. The model's internal logic can degrade as it interacts with a system that reshapes the context around it. In large language models, for instance, hallucination rates may increase with different prompt structures or domain-specific queries that weren’t present in training. The shift in user inputs or broader API usage can expose areas of brittle reasoning.

Time compounds this. In operational systems, small misalignments accumulate. A vision model may become less effective due to lighting conditions in newer camera hardware. Anomaly detection tools may degrade as seasonal patterns evolve or infrastructure changes. Even with static parameters, model effectiveness isn't frozen. It interacts with an ecosystem that’s always in motion. Long-term reliability depends on spotting gradual change.

That requires a mix of logging outputs, capturing failure patterns, and measuring inference results against updated baselines. In practice, this means implementing shadow evaluation setups, where newer versions run silently alongside the deployed one, and comparing their outputs before switching over. It also means validating assumptions—what used to work well might not anymore.

Governance, Compliance, and User Trust Require Transparency

One-time testing doesn’t cut it in high-stakes environments. Financial platforms, healthcare systems, and government services all operate under strict oversight, and AI models used in these spaces need to show not just that they worked once, but that they continue to behave as expected over time. Without clear records showing how outputs change and where issues emerge, organizations risk regulatory violations and public backlash.

But it’s not just about rules. Trust breaks down fast when people notice things slipping. A tool that once gave relevant answers but now feels out of step loses its edge. Doctors stop relying on systems that misread edge cases. Users ignore suggestions that seem off. Inconsistent behavior signals a system that's no longer dependable. Keeping that trust means checking in regularly.

Not just on averages, but on what’s happening at the margins—where users notice first. That might include structured feedback channels, built-in review steps, or even running updated models quietly in the background before replacing the old ones. It’s not easy to set up, but it's necessary. Models aren’t static assets. They live in changing environments, and staying useful means paying attention after launch, not just before it.

Conclusion

Deploying an AI model is only the beginning of its real-world journey. What looks solid in testing may not hold up under changing conditions or shifting data. Continuous evaluation helps teams see where things are drifting, where metrics no longer reflect quality, and how users are adapting in ways no benchmark could predict. It keeps systems aligned with their original intent and ensures they stay grounded in reality. Waiting for failure to appear before checking performance costs more than staying vigilant. Long-term reliability, safety, and trust depend on the habit of reassessing models after they go live.