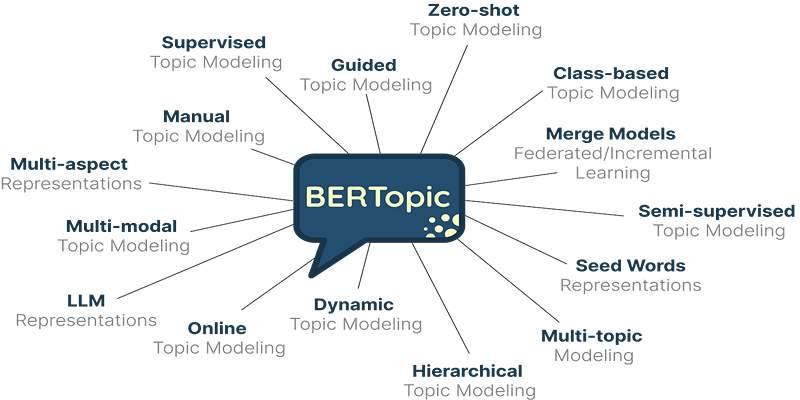

BERTopic blends modern embeddings with clustering and class-based TF-IDF to produce topics that read like short summaries instead of opaque vectors. Documents are embedded, similar items are grouped, and distinctive terms are scored to label each group.

The approach is flexible enough for varied corpora, yet it still benefits from a thoughtful setup. This guide walks through the moving parts, shows how to tune them without heavy ceremony, and shares habits that keep topics stable, useful, and explainable over time.

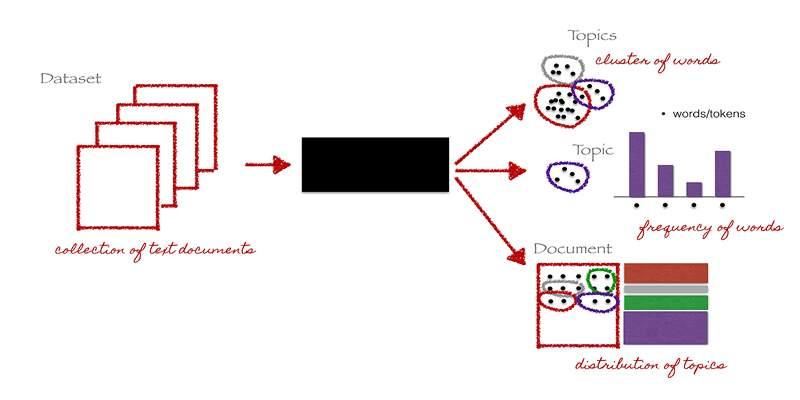

How The Pipeline Fits Together?

A sentence encoder converts each document into a dense vector. A reducer such as UMAP maps those vectors into a space that preserves local neighborhoods. HDBSCAN then finds dense regions and marks outliers as noise.

Finally, class-based TF-IDF scores terms that distinguish each cluster from the rest, which becomes the topic label. That last step matters because it favors words and short phrases that are common inside a cluster and uncommon elsewhere, giving labels that people recognize.

Prepare Text With A Light Touch

Heavy cleaning can erase signals that make clusters meaningful. Lowercase text, standardize quotes, and remove boilerplate that repeats across documents. Keep emojis and punctuation that carry tone or domain hints. Preserve multi-word expressions when possible, since c-TF-IDF gains power from meaningful phrases.

Be cautious with lemmatization that collapses technical terms into bland stems. Treat stopwords as a dial, not a switch, especially when documents are short and need function words to anchor meaning.

Choose Embeddings That Fit The Corpus

General English encoders work well for broad datasets. Domain encoders often yield tighter clusters for technical, legal, medical, or multilingual collections. Pick a model that matches expected language and jargon, then keep that model fixed through a round of experiments so comparisons remain fair.

Batch embedding to control memory, cache results on disk, and log the exact model name or hash in your run notes. When documents vary a lot in length, consider averaging sentence embeddings per document so long sections do not dominate.

Tune UMAP And HDBSCAN Without Guesswork

UMAP’s neighbors and min distance control how tightly local groups hold together after projection. HDBSCAN’s minimum cluster size and minimum samples decide how big and how dense a group must be to count as a topic. Start from sensible defaults, then change one knob at a time.

Watch two views while you tune, a scatterplot of the reduced space colored by cluster, and a bar chart of topic sizes. Aim for coherent islands and a size distribution that avoids a long tail of singletons that drown dashboards in noise.

Make Topic Labels People Trust

Class-based TF-IDF builds labels by comparing term frequencies inside a cluster to frequencies in the rest of the corpus. Improve labels by allowing bigrams and trigrams, filtering token patterns that add noise, and capping overly common words that flood many topics.

Read a handful of documents from each cluster and check whether the top terms actually describe what you see. When a topic feels broad or muddled, try raising the minimum cluster size or merge it with its nearest neighbor after checking content overlap.

Reduce And Merge Similar Topics Carefully

Topics often arrive in families that differ by small wording choices. A reduction pass can merge near duplicates by comparing topic representations and joining very similar pairs. Keep this step conservative and review the largest merges by hand.

If two topics overlap semantically but serve different audiences, keep them separate and annotate labels so users understand the distinction. A few careful merges simplify reports without hiding meaningful variation.

Evaluate With Numbers And Quick Reads

Automated metrics help you compare settings. Topic coherence assesses whether the top words of a topic tend to co-occur with one another, while topic diversity gauges the frequency with which different topics reuse shared words. Separation scores, such as silhouette or density measures, give a sense of cluster distinctness.

Pair numbers with a simple human read. Sample several topics across sizes, inspect a few documents from each, and judge whether the label captures the theme. Agreement between metrics and eyeballs signals that tuning has reached a steady point.

Handle Short And Long Documents

Very short snippets can scatter because they share few tokens. Pool them by author, thread, or session to provide more context, or relax clustering thresholds so small yet coherent themes survive.

Very long documents can dominate with repeated terms. Split them into sections, embed the sections, cluster at the section level, and then map back to parent documents for reporting. These small adjustments give each text a fair voice in the clustering step.

Track Topics Over Time

Language and document sources change over time, and those shifts can reshape topic boundaries if they go unmonitored. BERTopic can compute topic frequencies by time bucket and update representations as the corpus grows. Decide on a refresh cadence that matches your use case, then version embeddings, reducer settings, and clustering parameters together so comparisons stay fair across months.

When a label appears to drift, check whether the underlying content changed or whether the model choice or tuning moved the decision boundary. Clear versioning turns trend charts into evidence rather than anecdotes.

Present Results Stakeholders Understand

People engage faster when labels are short and examples are visible. Show the top terms, a one line description you write after reviewing samples, and a few dated snippets that capture the theme.

An intertopic distance map helps readers see which themes sit close to one another. If teams will act on the results, include a short note that explains refresh cadence and how to request corrections when a label feels off. Clear presentation turns model output into shared context.

Watch Bias, Privacy, And Drift

Topic models mirror their inputs. If the corpus overrepresents certain groups or viewpoints, topics will reflect that skew. Mask private fields before modeling, and avoid labels that reveal sensitive traits unless the project explicitly requires them.

Revisit the source mix regularly, since a quiet shift in where documents come from can change the story even when code stays the same. Keep archives of prior runs so you can explain why a topic split, merged, or faded.

Production Habits That Save Time

Write down the exact encoder, preprocessing, UMAP settings, HDBSCAN parameters, and c-TF-IDF options for every run. Save cluster assignments with stable document ids so you can trace changes across versions.

Before any update, run a tiny smoke test on a frozen sample to check that labels have not shifted in surprising ways. Schedule refreshes during quiet hours and publish a short note that links model versions to visible shifts in topic counts. These habits keep the pipeline calm and the audience confident.

Conclusion

BERTopic combines strong embeddings, sensible clustering, and readable labels to make topic modeling practical for real datasets. Treat preprocessing as gentle support, select an encoder that matches the corpus, and tune UMAP and HDBSCAN with a few clear views rather than guesswork.

Build labels with class-based TF-IDF, merge near duplicates with care, and evaluate with both metrics and quick human reads. Version every step and refresh on a steady schedule so trends remain honest as data shifts. With those habits in place, topics stay coherent, explanations remain grounded, and teams gain a stable map of what people are writing about.