The rise of artificial intelligence (AI) significantly impacts cybersecurity, presenting both opportunities and challenges. While AI enhances threat detection and response, malicious actors also exploit it to launch sophisticated attacks. This double-edged nature demands careful evaluation of AI's role, because how it is used will determine whether it serves cybersecurity as a powerful shield or as an enabler of threats.

The Current Cybersecurity Landscape

Cybersecurity is no longer just a technical concern—it is a strategic business imperative. From multinational corporations to small businesses, and from public infrastructure to private data networks, all are potential targets in today’s highly digitized world.

Modern cyber threats have evolved in complexity, often including:

- Phishing and spear-phishing attacks

- Ransomware and zero-day exploits

- Supply chain attacks

- Advanced persistent threats (APTs)

- Insider breaches and credential theft

These dynamic threats are quickly becoming too nimble for traditional security tools such as firewalls, antivirus software, and rule-based monitoring to contain. AI adds an adaptive, learning component to cybersecurity that can detect, respond to, and counter a threat far faster than a manual process.

AI as a Protective Shield: Enhancing Cyber Defense

AI’s contribution to cybersecurity is both proactive and reactive, allowing systems to anticipate attacks before they occur and respond faster when they do. Below are key ways in which AI fortifies cybersecurity defenses:

1. Real-Time Threat Detection and Prevention

In large networks, AI-driven systems can analyze network logs, usage patterns, and access records to identify unusual activity that may indicate a breach. Well-trained machine learning algorithms can discover patterns and anomalies that human analysts would otherwise overlook.

For example, when a user downloads an unusually large volume of data, or when multiple logins arrive from unexpected locations, AI can flag the activity and raise an alert so that the damage is contained before it escalates.
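The idea of flagging out-of-the-ordinary downloads can be sketched with a simple statistical check. This is a minimal illustration, not a production detector: the function name `is_anomalous`, the threshold of 3 standard deviations, and the sample download volumes are all assumptions made for the example. Real systems typically use richer models and many more signals.

```python
from statistics import mean, stdev

def is_anomalous(history, value, threshold=3.0):
    """Return True if `value` lies more than `threshold` standard
    deviations away from the mean of `history` (a z-score test)."""
    if len(history) < 2:
        return False  # not enough data to estimate a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return value != mu  # any change from a constant baseline stands out
    return abs(value - mu) / sigma > threshold

# Hypothetical daily download volumes (in MB) for one user.
daily_download_mb = [120, 135, 110, 128, 140, 125, 132]

print(is_anomalous(daily_download_mb, 130))   # a typical day
print(is_anomalous(daily_download_mb, 5000))  # a sudden bulk download
```

A z-score is only one of many possible anomaly measures. It is used here because it makes the notion of "unusual relative to a baseline" easy to see.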

2. Behavioral Analysis and User Profiling

AI helps create comprehensive behavioral baselines for users and devices. By learning what is considered "normal," AI tools can flag subtle deviations such as:

- Logging into systems outside usual working hours

- Accessing unfamiliar databases

- Performing abnormal file transfers

These insights are particularly valuable in identifying insider threats or stolen credentials being used by external attackers.
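A behavioral baseline of this kind might be sketched as follows. The class `UserBaseline`, its fields, and the resource names are hypothetical and chosen only for illustration. A real product would learn statistical profiles rather than plain sets of previously seen values.

```python
from dataclasses import dataclass, field

@dataclass
class UserBaseline:
    """Remembers the login hours and resources previously seen for a user."""
    usual_hours: set = field(default_factory=set)
    known_resources: set = field(default_factory=set)

    def learn(self, hour, resource):
        # Record an observed (hour, resource) pair as normal behavior.
        self.usual_hours.add(hour)
        self.known_resources.add(resource)

    def deviations(self, hour, resource):
        # Return the list of reasons why this event departs from the baseline.
        reasons = []
        if hour not in self.usual_hours:
            reasons.append("unusual_hour")
        if resource not in self.known_resources:
            reasons.append("unfamiliar_resource")
        return reasons

baseline = UserBaseline()
for hour in range(9, 18):           # assumed working hours, 09:00 to 17:00
    baseline.learn(hour, "crm_db")  # "crm_db" is a made-up resource name

print(baseline.deviations(10, "crm_db"))     # matches the baseline
print(baseline.deviations(3, "payroll_db"))  # off-hours and unfamiliar database
```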

3. Automation of Security Operations

Security teams often face alert fatigue—drowning in logs, false positives, and manual investigations. AI can automate and streamline routine tasks like:

- Log analysis

- Threat classification

- Initial triage and prioritization

- First-response remediation

This level of automation allows cybersecurity teams to focus on strategic decision-making and address the most critical threats without delay.
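As a rough sketch of initial triage and prioritization, alerts can be scored and sorted so that analysts see the most pressing ones first. The `Alert` fields, the severity weights, and the confidence cutoff below are assumptions made for this example. In practice the confidence value would come from a trained model, and the weights would be tuned to the organization.

```python
from dataclasses import dataclass

# Assumed weights; a real deployment would tune these.
SEVERITY_WEIGHT = {"low": 1, "medium": 3, "high": 7, "critical": 10}

@dataclass
class Alert:
    alert_id: str
    severity: str            # one of the SEVERITY_WEIGHT keys
    asset_criticality: int   # 1 (low value) to 5 (business critical)
    confidence: float        # model confidence that the alert is real, 0 to 1

def priority(alert):
    """Combine severity, asset value, and confidence into one score."""
    return SEVERITY_WEIGHT[alert.severity] * alert.asset_criticality * alert.confidence

def triage(alerts, min_confidence=0.2):
    """Drop likely false positives, then sort the rest by descending priority."""
    kept = [a for a in alerts if a.confidence >= min_confidence]
    return sorted(kept, key=priority, reverse=True)

alerts = [
    Alert("A1", "low", 2, 0.9),
    Alert("A2", "critical", 5, 0.8),
    Alert("A3", "high", 3, 0.1),   # below the confidence cutoff
    Alert("A4", "medium", 4, 0.6),
]

queue = [a.alert_id for a in triage(alerts)]
print(queue)
```

Filtering on confidence before sorting is one way to reduce alert fatigue, though a cutoff set too aggressively risks hiding real incidents.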

4. Strengthening Email and Endpoint Security

AI filters phishing emails more effectively by analyzing not just keywords, but contextual clues, tone, and sender behavior. It adapts over time, learning how phishing techniques evolve. On the endpoint side, AI can quickly identify malicious processes, quarantine infected files, and prevent malware propagation within seconds.
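To illustrate how several signals can be combined beyond keywords alone, here is a deliberately simple scoring sketch. It is a hand-written heuristic, not a learned model: the term list, the point values, and the function name `phishing_score` are all assumptions. An actual AI filter would learn such signals and their weights from labeled mail.

```python
# Assumed urgency phrases; a trained model would learn these from data.
URGENCY_TERMS = ("urgent", "immediately", "verify your account", "wire transfer")

def phishing_score(subject, body, sender_domain, reply_to_domain, sender_seen_before):
    """Add up simple risk signals: urgent tone, mismatched reply-to, unknown sender."""
    text = f"{subject} {body}".lower()
    score = sum(1 for term in URGENCY_TERMS if term in text)  # tone and keywords
    if reply_to_domain != sender_domain:
        score += 2  # replies are redirected to a different domain
    if not sender_seen_before:
        score += 1  # no prior history with this sender
    return score

suspicious = phishing_score(
    "Urgent: wire transfer needed", "Please act immediately.",
    "example.com", "example.net", sender_seen_before=False,
)
routine = phishing_score(
    "Lunch on Friday", "See you at noon.",
    "example.com", "example.com", sender_seen_before=True,
)
print(suspicious, routine)
```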

AI as a Threat Amplifier: A Tool in the Wrong Hands

While AI has brought significant advances in defense, it has also become an asset for malicious actors. The same features that make AI effective for defenders—speed, scalability, and adaptability—can be exploited by cybercriminals to carry out more efficient and damaging attacks.

1. AI-Enhanced Malware

Adversaries are leveraging AI to develop malware that adapts on the fly, avoiding detection and increasing impact. These AI-powered threats can:

- Evade endpoint security

- Rewrite their code (polymorphism)

- Mimic legitimate system processes

- Alter delivery methods based on environmental cues

Such malware is particularly dangerous because it is harder to detect using conventional signature-based tools.
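The weakness of signature matching can be shown with a harmless example. The sketch below assumes a detector that stores SHA-256 hashes of known-bad files, which is a simplification of how signature-based tools work, and it uses placeholder byte strings rather than real samples. Changing a single byte produces a completely different hash, so the altered file no longer matches.

```python
import hashlib

def signature(payload: bytes) -> str:
    """Return the SHA-256 hex digest used here as the file's signature."""
    return hashlib.sha256(payload).hexdigest()

# Placeholder content standing in for a previously catalogued sample.
original = b"EXAMPLE-PAYLOAD-v1"
known_bad = {signature(original)}

def matches_signature(payload: bytes) -> bool:
    """Check the payload's hash against the set of known-bad signatures."""
    return signature(payload) in known_bad

variant = original + b" "  # same content with one extra byte

print(matches_signature(original))  # the catalogued sample is recognized
print(matches_signature(variant))   # the trivially altered copy is not
```

This is why defenders increasingly pair signatures with behavior-based detection, which looks at what a program does rather than what its bytes are.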

2. Social Engineering at Scale: The Rise of Deepfakes

Social engineering attacks have evolved beyond simple deceptive emails. AI-generated voice or video impersonations—known as deepfakes—are now used to impersonate executives or key decision-makers, convincing employees to transfer funds or reveal confidential information.

The increasing realism of such content makes detection difficult, leaving organizations exposed to fraud, identity manipulation, and data breaches.

3. AI-Driven Attack Automation

Cybercriminals use AI to accelerate the reconnaissance process, identify vulnerabilities in systems, and craft highly customized attacks. AI-based tools can analyze an organization's digital footprint and select the most effective attack vectors with little to no human input.

The result: more sophisticated attacks executed at scale, targeting victims with greater precision and higher success rates.

Managing the Double-Edged Sword

To leverage AI's benefits while minimizing its risks, organizations must implement robust governance, transparency, and human oversight. Here are critical strategies for managing AI in cybersecurity:

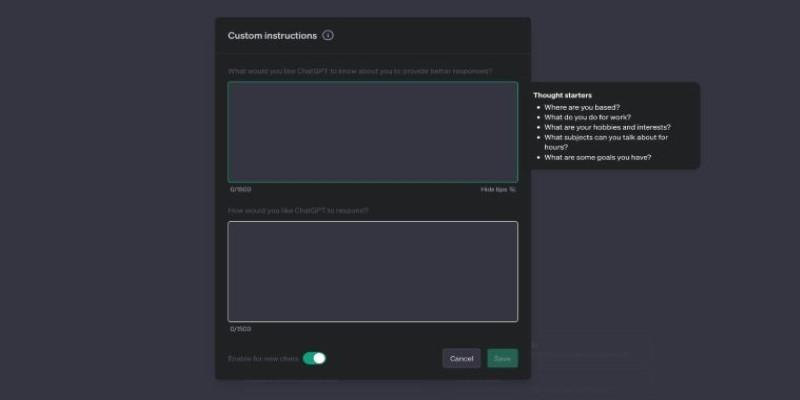

1. Emphasizing Explainable AI (XAI)

Explainable AI enables stakeholders to understand how AI systems make decisions. This transparency is crucial for:

- Auditability and compliance

- Identifying bias in detection models

- Improving user trust in automated tools

By making AI’s decision-making process clear, organizations can better assess its effectiveness and ethical alignment.
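One simple form of explainability is to report how much each input contributed to a risk score. The sketch below assumes a linear scoring model with made-up feature names and weights. More complex models need dedicated explanation techniques, but the goal of showing an analyst why an alert fired is the same.

```python
# Hypothetical feature weights for a linear risk score.
WEIGHTS = {"failed_logins": 0.5, "off_hours": 2.0, "new_device": 1.5}

def explain(features):
    """Return the total risk score and each feature's contribution,
    ordered from most to least influential."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    score = sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda kv: kv[1], reverse=True)
    return score, ranked

score, ranked = explain({"failed_logins": 6, "off_hours": 1, "new_device": 0})

print(f"risk score: {score}")
for name, contribution in ranked:
    print(f"  {name}: {contribution}")
```

A breakdown like this supports auditability, because a reviewer can see which signals drove a decision and question any that look biased or irrelevant.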

2. Developing Internal AI Security Policies

Organizations must create dedicated frameworks governing how AI tools are selected, trained, and deployed in security environments. These should cover:

- Data privacy standards

- Model validation procedures

- Incident response protocols involving AI

- Regular retraining to reflect new threat patterns

Such policies help organizations ensure that AI usage aligns with both legal requirements and organizational risk tolerance.

3. Fostering Human-AI Collaboration

AI should augment—not replace—human analysts. While AI can process vast amounts of data and flag threats quickly, human judgment is essential for nuanced interpretation, ethical decisions, and handling ambiguous situations.

Cybersecurity teams should be trained to work alongside AI systems, leveraging them as decision-support tools rather than autonomous agents.

Real-World Impact: AI in Action

Numerous organizations are already reaping the benefits of AI-powered cybersecurity:

- Banking institutions use AI to detect fraudulent activities across millions of transactions in real time.

- Telecommunication companies deploy AI to identify network anomalies and prevent service disruption.

- Government agencies leverage AI to protect national infrastructure from targeted cyber threats and intrusions.

These success stories highlight AI’s capacity to scale security efforts, reduce incident response times, and improve threat visibility.

Challenges Ahead: Preparing for the Future

Despite its promise, the integration of AI into cybersecurity is not without challenges:

- Data Dependency: AI is only as reliable as the data it learns from. Poor or biased datasets can lead to flawed detection models.

- High Implementation Costs: Advanced AI systems can be expensive, putting them out of reach for smaller organizations.

- Lack of Skilled Personnel: There is a growing need for cybersecurity professionals with AI and machine learning expertise.

- Ethical Concerns: Misuse, lack of oversight, and algorithmic bias remain major areas of concern.

Addressing these issues requires a unified effort from developers, policymakers, regulators, and security professionals to ensure AI remains a force for good.

Conclusion

Artificial intelligence stands at the center of a transformative era in cybersecurity. It has proven its potential as a protective shield, capable of defending against ever-evolving threats with precision and agility. However, in the wrong hands, AI also becomes a threat amplifier, increasing the speed, scale, and impact of malicious activity. Whether AI ends up as a shield or an amplifier ultimately depends on how it is used, who controls it, and whether sufficient guardrails are in place.